10 Nov 2019

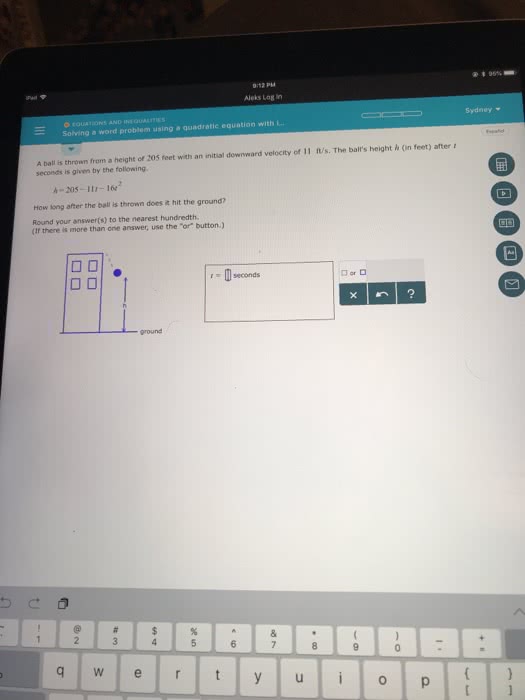

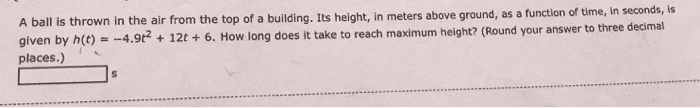

A ball is thrown upward with an initial velocity of 35 meters per second from a cliff that is 90 meters high. The height of the ball is given by the quadratic equation $h = -4.9t^2 + 35t + 90$, where $h$ is the height in meters and $t$ is the time in seconds since the ball was thrown. Find the time it takes the ball to hit the ground. Round your answer to the nearest tenth of a second.
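
One way to check the arithmetic is to set the height to zero and apply the quadratic formula to the coefficients given in the question (a = -4.9, b = 35, c = 90). The sketch below is only an illustration, not the posted answer; the function name `time_to_ground` is made up for this example. The discriminant is 35² + 4(4.9)(90) = 2989, and only the positive root is physically meaningful, since the negative root would be a time before the throw.

```python
import math


def time_to_ground(a=-4.9, b=35.0, c=90.0):
    """Return the positive root of a*t**2 + b*t + c = 0.

    Hypothetical helper for this example; the default coefficients
    are the ones given in the question.
    """
    disc = b * b - 4 * a * c  # discriminant of the quadratic
    if disc < 0:
        raise ValueError("no real roots: the ball never reaches h = 0")
    sqrt_disc = math.sqrt(disc)
    roots = [(-b + sqrt_disc) / (2 * a), (-b - sqrt_disc) / (2 * a)]
    # With a < 0 and c > 0 there is one negative and one positive root;
    # the positive one is the time after the throw.
    return max(roots)


t = time_to_ground()
print(round(t, 1))  # time in seconds, rounded to the nearest tenth
```

Under these assumptions the positive root is roughly 9.15 s, which rounds to 9.2 seconds.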

Irving Heathcote Lv2

2 Apr 2019