MATH 220 final: Stanford University Maths Final Examination Questions and Solutions

Mathematics Department Stanford University

Math 51H Final Examination, December 7, 2015

Solutions

Unless otherwise indicated, you can use results covered in lecture and homework, provided they are clearly stated.

If necessary, continue solutions on backs of pages

Note: work sheets are provided for your convenience, but will not be graded

Question 7 is extra credit only! Work on it only if you are done with the other problems!

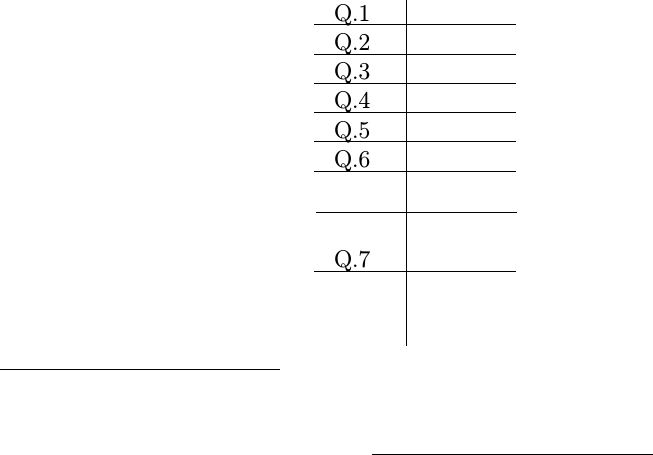

Score table: Q.1, Q.2, Q.3, Q.4, Q.5, Q.6, T/35, Q.7

Name (Print Clearly):

I understand and accept the provisions of the honor code (Signed)

Name: Page 1/8

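1(a) (3 points): Find (with detailed proof!) $\det A$, $\det B$ and $\det(AB)$ if

$$
A = \begin{pmatrix} 0 & 0 & 1 & 2 \\ 0 & 0 & 2 & 1 \\ 0 & 3 & 0 & 0 \\ 2 & 0 & 0 & 0 \end{pmatrix},
\qquad
B = \begin{pmatrix} 1 & 2 & 3 & 4 \\ 0 & 1 & 2 & 3 \\ 0 & 0 & 1 & 2 \\ 0 & 0 & 0 & 1 \end{pmatrix}.
$$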

Solution: The determinant of an $n \times n$ matrix $C$ with entries $c_{ij}$ is

$$
\det C = \sum_{\sigma \in S_n} (\operatorname{sign} \sigma)\, c_{\sigma_1 1} \cdots c_{\sigma_n n},
$$

where the sum is over permutations $\sigma$ of $\{1, \dots, n\}$. This means that each summand takes one entry from each column of $C$, and the entries corresponding to different columns must come from different rows. In particular, in the case of $A$ there are only two non-zero summands when we take into account that the product vanishes if any factor vanishes. Indeed, the only non-trivial summands are those with $\sigma$ satisfying $\sigma_1 = 4$, $\sigma_2 = 3$, and either $\sigma_3 = 1$, in which case $\sigma_4 = 2$, or $\sigma_3 = 2$, in which case $\sigma_4 = 1$. The sign of the first permutation, $(4,3,1,2)$, is $-1$, as the number of inversions is $2 + 2 + 1 = 5$, and that of the second, $(4,3,2,1)$, is $1$, as the number of inversions is $3 + 2 + 1 = 6$. Thus,

$$
\det A = -2 \cdot 3 \cdot 1 \cdot 1 + 2 \cdot 3 \cdot 2 \cdot 2 = 6 \cdot 3 = 18.
$$

Since $B$ is upper triangular, the only non-trivial summand corresponds to the identity permutation $(1,2,3,4)$ (namely we need $\sigma_k \le k$ for all $k$, but the injectivity of $\sigma$ means $\sigma_k = k$ in this case), which has no inversions, so has sign $1$, and thus $\det B = +1 \cdot 1 \cdot 1 \cdot 1 = 1$.

Since $\det(AB) = \det(A)\det(B)$ for all square matrices $A, B$ of the same size, in this case we have $\det(AB) = 18$ as well.
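The permutation-sum formula used above can be checked mechanically. The following is a minimal sketch, not part of the exam solution: the helper names `sign`, `det_leibniz`, and `matmul` are illustrative choices, and permutations are 0-indexed rather than 1-indexed as in the text.

```python
from itertools import permutations

def sign(perm):
    """Sign of a permutation, computed from its number of inversions."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1

def det_leibniz(C):
    """Determinant as the sum over sigma of sign(sigma) * prod_k C[sigma[k]][k]."""
    n = len(C)
    total = 0
    for sigma in permutations(range(n)):
        term = sign(sigma)
        for col in range(n):
            term *= C[sigma[col]][col]  # row sigma[col], column col
        total += term
    return total

def matmul(X, Y):
    """Plain matrix product of two square lists-of-lists."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

A = [[0, 0, 1, 2],
     [0, 0, 2, 1],
     [0, 3, 0, 0],
     [2, 0, 0, 0]]
B = [[1, 2, 3, 4],
     [0, 1, 2, 3],
     [0, 0, 1, 2],
     [0, 0, 0, 1]]

det_A = det_leibniz(A)
det_B = det_leibniz(B)
det_AB = det_leibniz(matmul(A, B))
```

With the matrices from the problem, `det_A`, `det_B`, and `det_AB` should agree with the values 18, 1, and 18 derived by hand. The sum has $n!$ terms, so this approach is only sensible for small matrices.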

(b) (3 points) Suppose $A : V \to W$ is linear, where $V, W$ are finite dimensional real vector spaces. Let $N(A) = \{x \in V : Ax = 0\}$ and $R(A) = \{Ax : x \in V\} \subset W$. Show that

$$
\dim N(A) + \dim R(A) = \dim V.
$$

Note: If you want, you may use matrices, but be specific about the correspondence between matrices and operators. Also, this problem works over any field.

Solution: First, $N(A)$ is a subspace of $V$, and $V$ is finite dimensional, so there is a basis $e_1, \dots, e_k$ of $N(A)$ (with possibly $k = 0$), and this can be extended to a basis $e_1, \dots, e_k, e_{k+1}, \dots, e_n$ of $V$. We claim that $Ae_{k+1}, \dots, Ae_n$ is a basis of $R(A)$, which will finish the problem since in this case $\dim R(A) = n - k$, $\dim N(A) = k$, $\dim V = n$.

First, $Ae_{k+1}, \dots, Ae_n$ span $R(A)$ because any element $y$ of $R(A)$ is of the form $y = Ax = A \sum_{j=1}^{n} c_j e_j$ (using that $e_1, \dots, e_n$ is a basis of $V$), thus

$$
y = \sum_{j=1}^{n} c_j Ae_j = \sum_{j=k+1}^{n} c_j Ae_j,
$$

where the last step used $e_j \in N(A)$ for $j \le k$, so $Ae_j = 0$. Thus, $Ae_{k+1}, \dots, Ae_n$ span $R(A)$.

On the other hand, $Ae_{k+1}, \dots, Ae_n$ are linearly independent, for if $\sum_{j=k+1}^{n} c_j Ae_j = 0$ then $0 = A \sum_{j=k+1}^{n} c_j e_j$, so $\sum_{j=k+1}^{n} c_j e_j \in N(A)$, so $\sum_{j=k+1}^{n} c_j e_j = \sum_{j=1}^{k} d_j e_j$ for some scalars $d_j$, so $\sum_{j=1}^{n} c_j e_j = 0$ if we let $c_j = -d_j$ for $j \le k$. But $e_1, \dots, e_n$ are linearly independent by construction, so $c_j = 0$ for all $j$, and thus we conclude that $Ae_{k+1}, \dots, Ae_n$ are linearly independent, hence they give a basis for $R(A)$, proving the claim, and thus completing the proof of

$$
\dim N(A) + \dim R(A) = \dim V.
$$
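As a concrete illustration of the identity, the sketch below checks it for one matrix using exact rational row reduction. It assumes the operator is $x \mapsto Mx$ from $\mathbb{R}^4$ to $\mathbb{R}^3$ in the standard bases, so that $\dim R(A)$ is the number of pivot columns. The matrix `M` and the helper names `rref`, `null_space_basis`, and `apply_matrix` are hypothetical choices for this example only.

```python
from fractions import Fraction

def rref(rows):
    """Return (reduced row echelon form, pivot column indices) using exact arithmetic."""
    M = [[Fraction(v) for v in row] for row in rows]
    n_rows, n_cols = len(M), len(M[0])
    pivots = []
    r = 0
    for c in range(n_cols):
        if r == n_rows:
            break
        # Look for a row at or below r with a non-zero entry in column c.
        p = next((i for i in range(r, n_rows) if M[i][c] != 0), None)
        if p is None:
            continue
        M[r], M[p] = M[p], M[r]
        pivot_value = M[r][c]
        M[r] = [v / pivot_value for v in M[r]]
        for i in range(n_rows):
            if i != r and M[i][c] != 0:
                factor = M[i][c]
                M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        pivots.append(c)
        r += 1
    return M, pivots

def null_space_basis(rows):
    """One basis vector of the null space per free (non-pivot) column."""
    R, pivots = rref(rows)
    n_cols = len(rows[0])
    basis = []
    for free_col in (c for c in range(n_cols) if c not in pivots):
        v = [Fraction(0)] * n_cols
        v[free_col] = Fraction(1)
        for row_idx, pivot_col in enumerate(pivots):
            v[pivot_col] = -R[row_idx][free_col]
        basis.append(v)
    return basis

def apply_matrix(rows, v):
    """Compute the matrix-vector product rows * v."""
    return [sum(a * b for a, b in zip(row, v)) for row in rows]

# Hypothetical example: a 3x4 matrix whose second row is twice the first.
M = [[1, 2, 3, 4],
     [2, 4, 6, 8],
     [0, 1, 1, 0]]

dim_range = len(rref(M)[1])      # number of pivot columns
kernel = null_space_basis(M)
dim_null = len(kernel)
dim_V = len(M[0])
```

Here `dim_null + dim_range` should equal `dim_V`, mirroring the proof: the free columns index a basis of $N(A)$, and the pivot columns account for $R(A)$.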


2 (a) (2½ points): Suppose that $f : \mathbb{R}^n \to \mathbb{R}$ and let $a$ be a given point of $\mathbb{R}^n$. Give the proof that if there is $\rho > 0$ such that the partial derivatives $D_j f(x)$, $j = 1, \dots, n$, exist for $\|x - a\| < \rho$ and are continuous at $a$, then $f$ is differentiable at $a$.

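Solution: As in lecture, let $h = (h_1, \dots, h_n)^T$ and define $h^{(j)} = (h_1, \dots, h_j, 0, \dots, 0)$ for $j = 1, \dots, n$, and $h^{(0)} = 0$. Then

$$
f(a+h) - f(a) = \sum_{j=1}^{n} \bigl( f(a + h^{(j)}) - f(a + h^{(j-1)}) \bigr) = \sum_{j=1}^{n} h_j D_j f(a + h^{(j-1)} + \theta_j h_j e_j)
$$

for some $\theta_j \in (0,1)$ by the mean-value theorem from 1-variable calculus. Thus for $0 < \|h\| < \rho$ we have

$$
\frac{1}{\|h\|} \Bigl| f(a+h) - f(a) - \sum_{j=1}^{n} h_j D_j f(a) \Bigr|
= \Bigl| \sum_{j=1}^{n} \frac{h_j}{\|h\|} \bigl( D_j f(a + h^{(j-1)} + \theta_j h_j e_j) - D_j f(a) \bigr) \Bigr|
\le \sum_{j=1}^{n} \bigl| D_j f(a + h^{(j-1)} + \theta_j h_j e_j) - D_j f(a) \bigr| \to 0
$$

as $h \to 0$, because $|h_j| \le \|h\|$ and $D_j f(x)$ is continuous at $x = a$. Hence $f$ is differentiable at $a$.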
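The limit in this argument can be observed numerically. The sketch below is only an illustration under assumptions not in the exam: the function `f`, the base point `a`, and the direction of approach are hypothetical choices, and the partial derivatives in `grad_f` were computed by hand for that particular `f`.

```python
import math

def f(x, y):
    # Hypothetical example with continuous partial derivatives everywhere.
    return math.sin(x) * y + x * x

def grad_f(x, y):
    # Hand-computed partial derivatives (D_1 f, D_2 f) of the example above.
    return (math.cos(x) * y + 2.0 * x, math.sin(x))

def remainder_ratio(a, h):
    """Return |f(a+h) - f(a) - sum_j h_j D_j f(a)| / ||h||."""
    d1, d2 = grad_f(*a)
    linear_part = h[0] * d1 + h[1] * d2
    numerator = abs(f(a[0] + h[0], a[1] + h[1]) - f(*a) - linear_part)
    return numerator / math.hypot(h[0], h[1])

a = (0.5, -1.0)
direction = (1.0, -2.0)

# Shrink h along a fixed direction and record the normalized remainder.
ratios = [
    remainder_ratio(a, (t * direction[0], t * direction[1]))
    for t in (1e-1, 1e-2, 1e-3, 1e-4)
]
```

For this example the entries of `ratios` shrink roughly in proportion to $\|h\|$, which is consistent with the quotient in the proof tending to 0. A single direction of approach does not prove differentiability; it only illustrates the estimate.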

(b) (3½ points): State (without proof) the Lagrange multiplier theorem, and use it (together with any other theorems from lecture that you need) to find a point where the function $xy + z^3$ takes its maximum subject to the constraint that $x^4 + y^4 + z^4 = 1$, and justify your answer.

Note: Your discussion should include the reason that the maximum exists.

Solution: The Lagrange multiplier theorem states that if $g_1, \dots, g_k$ are $C^1$ functions on an open set $U \subset \mathbb{R}^n$, with linearly independent differentials on their joint zero set $S = \{x \in U : g_1(x) = \dots = g_k(x) = 0\}$, then for any $C^1$ function $f : U \to \mathbb{R}$ and any critical point $x$ of $f|_S$, there exist $\lambda_j \in \mathbb{R}$, $j = 1, \dots, k$, such that $Df(x) = \sum_{j=1}^{k} \lambda_j Dg_j(x)$.

Let $g(x, y, z) = x^4 + y^4 + z^4 - 1$, defined on $\mathbb{R}^3$, and let $S = \{(x, y, z) : g(x, y, z) = 0\}$. If $(x, y, z) \in S$, then $x^4 + y^4 + z^4 = 1$ shows that $x^4, y^4, z^4 \le 1$ since all summands are non-negative. Thus, $|x|, |y|, |z| \le 1$, so $S$ is bounded. On the other hand, the map $g$ is $C^\infty$, so in particular continuous, so $g^{-1}(\{0\}) = S$ is closed since $\{0\} \subset \mathbb{R}$ is closed. Correspondingly $S$ is compact (as it is closed and bounded), so any continuous function, such as $f|_S$, attains its maximum and minimum on $S$.

We have $g$ is $C^\infty$ and $Dg(x, y, z) = (4x^3, 4y^3, 4z^3)$, so the vanishing of $Dg$ means $x = y = z = 0$, and thus $Dg$ does not vanish on $S$. Correspondingly, by the implicit function theorem, $S$ is a $C^\infty$ submanifold of $\mathbb{R}^3$. Further, any critical points $p$ of $f|_S$, which includes all local maxima and minima, satisfy $Df(p) = \lambda Dg(p)$ for some $\lambda \in \mathbb{R}$ by the Lagrange multiplier theorem. Since $Df(x, y, z) = (y, x, 3z^2)$, this means that $y = 4\lambda x^3$, $x = 4\lambda y^3$, $3z^2 = 4\lambda z^3$. If $\lambda = 0$, this gives $(x, y, z) = 0$, but this is not in $S$, so to find critical points of $f|_S$ we may assume $\lambda \ne 0$. Substituting $y$ from the first equation into the second gives $x = 256\lambda^4 x^9$, so either $x = 0$ or $256\lambda^4 x^8 = 1$, i.e. $\lambda x^2 = \pm\frac{1}{4}$; it also gives $y = (4\lambda x^2)x = \pm x$, so $z^4 = 1 - 2x^4$.
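The reduction of the first two multiplier equations can be spot-checked numerically. This is a minimal sketch, assuming arbitrary sample values of $\lambda$; the helper names `grad_f`, `grad_g`, `nonzero_xy`, and `residuals` are hypothetical. It only tests the case $x \ne 0$ of the equations $y = 4\lambda x^3$, $x = 4\lambda y^3$ and does not locate the maximum.

```python
def grad_f(x, y, z):
    # Df for f(x, y, z) = x*y + z**3.
    return (y, x, 3.0 * z**2)

def grad_g(x, y, z):
    # Dg for g(x, y, z) = x**4 + y**4 + z**4 - 1.
    return (4.0 * x**3, 4.0 * y**3, 4.0 * z**3)

def nonzero_xy(lam):
    """Positive x solving 256 * lam**4 * x**8 = 1, with y from y = 4 * lam * x**3."""
    x = (1.0 / (256.0 * lam**4)) ** 0.125
    y = 4.0 * lam * x**3
    return x, y

def residuals(lam):
    """Residuals of the first two multiplier equations, plus |y| - |x|."""
    x, y = nonzero_xy(lam)
    fx, fy, _ = grad_f(x, y, 0.0)
    gx, gy, _ = grad_g(x, y, 0.0)
    return (fx - lam * gx, fy - lam * gy, abs(y) - abs(x))

# Arbitrary non-zero sample multipliers (assumed for illustration only).
sample_lambdas = (0.25, -0.5, 2.0)
all_residuals = [residuals(lam) for lam in sample_lambdas]
```

Each residual should vanish up to rounding, which matches the conclusion that $x \ne 0$ forces $256\lambda^4 x^8 = 1$ and $y = \pm x$, with the sign of $y/x$ equal to the sign of $\lambda$.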

If $x = 0$, we have $y = 0$ as well, thus $z = \pm 1$ on $S$, in which case by the third equation $3 = 4\lambda(\pm 1)^3$, which has a solution $\lambda \in \mathbb{R}$, thus $(0, 0, \pm 1)$ are critical points of $f|_S$ and
